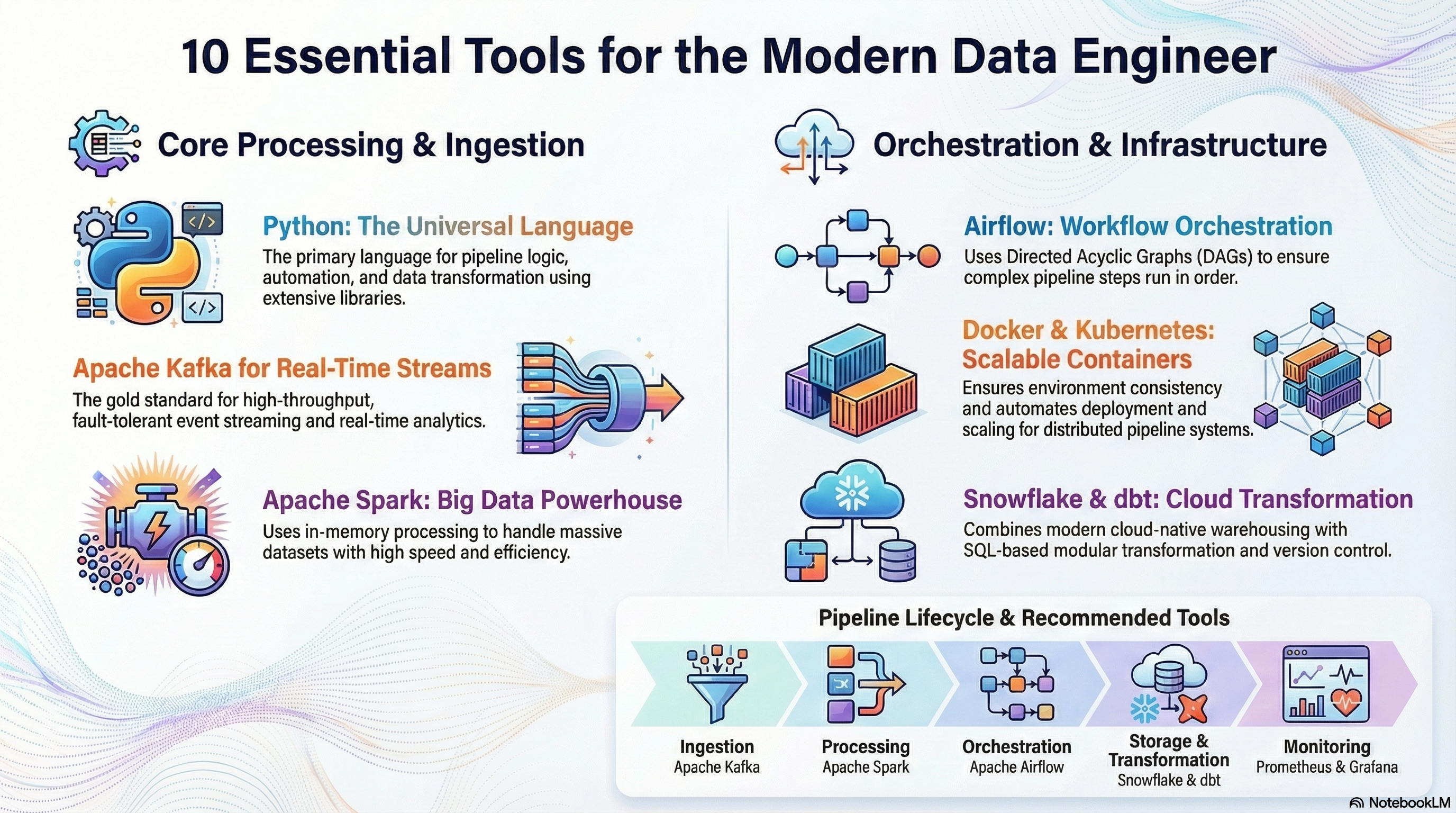

In today’s data-driven world, organizations rely heavily on robust data pipelines to move, transform, and analyze data efficiently. Whether you’re building batch processing systems or real-time streaming architectures, having the right tools can make or break your workflow.

This guide walks you through 10 essential tools every data engineer should know to design, build, and maintain scalable data pipelines. These tools cover everything from ingestion and orchestration to monitoring and storage.

Table of Contents

Toggle1. Apache Kafka – The Backbone of Real-Time Data

Apache Kafka has become the gold standard for real-time data streaming. It allows you to publish, subscribe to, and process streams of records in real time.

Why it matters:

- Handles high-throughput data streams

- Enables event-driven architectures

- Fault-tolerant and scalable

Use case: Real-time analytics, log aggregation, and event streaming.

2. Apache Airflow – Workflow Orchestration Made Easy

Apache Airflow is widely used for scheduling and orchestrating complex data workflows.

Key features:

- DAG-based pipeline design

- Strong community support

- Easy integration with cloud services

Why you need it: Pipelines often involve multiple steps Airflow ensures everything runs in the right order, at the right time.

3. Python – The Universal Language of Data Engineering

Python is the most popular language in data engineering due to its simplicity and powerful ecosystem.

Why Python stands out:

- Extensive libraries (Pandas, PySpark)

- Easy to learn and write

- Strong community support

Use case: Data transformation, scripting, automation, and pipeline logic.

4. Apache Spark – Big Data Processing Powerhouse

Apache Spark is designed for large-scale data processing and analytics.

Highlights:

- In-memory processing for speed

- Supports batch and streaming

- Works well with big data ecosystems

Best for: Processing massive datasets quickly and efficiently.

5. Docker – Containerization for Consistency

Docker allows you to package applications and dependencies into containers.

Why it’s essential:

- Ensures consistency across environments

- Simplifies deployment

- Improves scalability

Use case: Running pipelines reliably across development, testing, and production.

6. Kubernetes – Orchestrating Containers at Scale

Kubernetes helps manage and scale containerized applications.

Key benefits:

- Automated scaling and deployment

- Self-healing systems

- Resource optimization

Why data engineers use it: For managing complex, distributed pipeline systems in production.

7. AWS Glue – Serverless ETL Service

AWS Glue is a fully managed extract, transform, load (ETL) service.

Advantages:

- Serverless (no infrastructure management)

- Seamless integration with AWS ecosystem

- Automated schema discovery

Use case: Building ETL pipelines quickly in cloud environments.

8. Snowflake – Modern Data Warehousing

Snowflake is a cloud-native data platform designed for scalability and performance.

Why it’s popular:

- Separates compute and storage

- Handles structured and semi-structured data

- High concurrency support

Use case: Storing and querying large datasets efficiently.

9. dbt (Data Build Tool) – Transformations Done Right

dbt enables data engineers and analysts to transform data within the warehouse.

Key features:

- SQL-based transformations

- Version control integration

- Testing and documentation built-in

Why it matters: Makes transformations more modular, testable, and maintainable.

10. Prometheus & Grafana – Monitoring and Observability

Monitoring is crucial for maintaining healthy pipelines.

Prometheus and Grafana are often used together.

What they offer:

- Real-time metrics collection

- Custom dashboards

- Alerting capabilities

Use case: Tracking pipeline performance, detecting failures, and ensuring reliability.

Putting It All Together

A modern data pipeline is rarely built with just one tool. Instead, it’s a combination of technologies working together:

- Ingestion: Kafka

- Processing: Spark

- Orchestration: Airflow

- Storage: Snowflake

- Transformation: dbt

- Deployment: Docker + Kubernetes

- Monitoring: Prometheus + Grafana

This ecosystem allows data engineers to build pipelines that are scalable, reliable, and efficient.

Choosing the Right Stack

Not every project requires all these tools. Your choices should depend on:

- Data volume: Small vs big data

- Latency needs: Batch vs real-time

- Cloud vs on-premise

- Team expertise

For example:

- A startup might rely on Python + Airflow + Snowflake

- A large enterprise may use Kafka + Spark + Kubernetes

Final Thoughts

Data engineering is evolving rapidly, and tools are becoming more powerful and accessible. Mastering these 10 tools will give you a strong foundation to build modern data pipelines that can handle real-world challenges.

Start small, experiment with a few tools, and gradually expand your stack as your needs grow. The key is not just knowing the tools but understanding how and when to use them together.