Introduction:

Large Language Models (LLMs) like ChatGPT, Gemini, and Claude are trained on massive datasets, but that training data stops at a cutoff date. The world keeps changing after that point: new technologies, trends, and information emerge constantly, so a model that is trained once gradually falls out of date.

Continuous Learning helps LLMs stay updated by allowing them to learn from new data over time without losing their existing knowledge.

What is Continuous Learning?

Continuous Learning (also called Continual Learning or Lifelong Learning) is an approach in which an AI model:

- Learns new information regularly

- Updates its knowledge over time

- Improves performance continuously

- Adapts to new domains and user needs

Instead of training the model once and stopping, continuous learning allows the model to evolve.

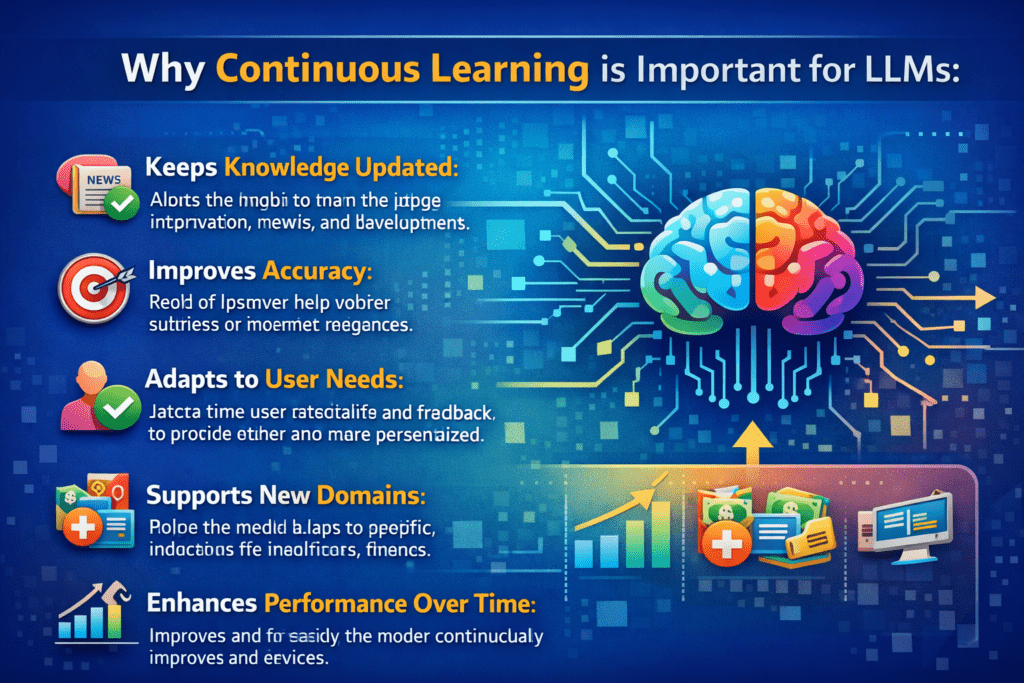

Why Continuous Learning is Important for LLMs:

1. Keeps Knowledge Updated

New events, technologies, and information can be added after the original training run.

2. Improves Accuracy

Models that learn from recent data give fewer outdated responses.

3. Adapts to User Behavior

Learning from interactions and feedback helps personalize responses and improve the user experience.

4. Supports New Domains

A general model can be updated for domains such as healthcare, finance, and education.

How Continuous Learning Works:

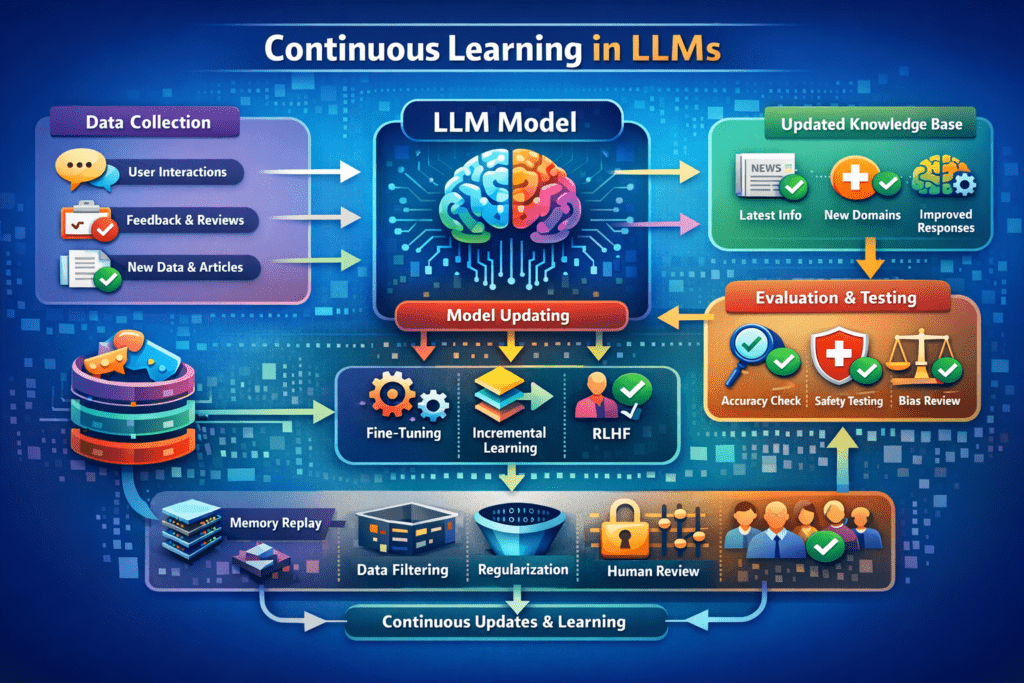

1. Data Collection

New data is collected from:

- User interactions

- Feedback

- New documents or datasets

2. Model Updating

The model is updated using:

- Fine-tuning with new data

- Incremental training

- Reinforcement Learning from Human Feedback (RLHF)

3. Evaluation

After updating, the model is tested to ensure that:

- Accuracy has improved on the new data

- Performance on existing tasks has not dropped

- Outputs are not harmful or biased
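The three evaluation checks can be sketched as a simple promotion gate. This is a minimal illustration, not a standard API: `EvalReport`, `should_promote`, and the `max_regression_drop` threshold are hypothetical names and values, and the scores are assumed to come from whatever test suites a team already runs.

```python
from dataclasses import dataclass


@dataclass
class EvalReport:
    accuracy: float             # accuracy on test cases drawn from the new data
    regression_accuracy: float  # accuracy on a held-out set of older tasks
    flagged_outputs: int        # outputs flagged by a safety or bias check


def should_promote(current: EvalReport, candidate: EvalReport,
                   max_regression_drop: float = 0.01) -> bool:
    """Return True only if the updated model passes all three checks."""
    improved = candidate.accuracy > current.accuracy
    no_drop = (candidate.regression_accuracy
               >= current.regression_accuracy - max_regression_drop)
    no_new_harm = candidate.flagged_outputs <= current.flagged_outputs
    return improved and no_drop and no_new_harm


# Illustrative numbers only, not measured results.
current = EvalReport(accuracy=0.80, regression_accuracy=0.90, flagged_outputs=2)
good_update = EvalReport(accuracy=0.85, regression_accuracy=0.895, flagged_outputs=1)
forgetful_update = EvalReport(accuracy=0.88, regression_accuracy=0.80, flagged_outputs=1)

print(should_promote(current, good_update))
print(should_promote(current, forgetful_update))
```

The second candidate scores higher on the new data but is rejected because it regresses on older tasks, which is the failure mode the "no performance drop" check is meant to catch.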

Techniques Used in Continuous Learning:

1. Incremental Learning

The model learns from small batches of new data as they arrive, without being retrained from scratch.
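To make the idea concrete, here is a toy sketch of incremental learning on a tiny linear model rather than an LLM. The class `OnlineLinearModel` and the generator `batches` are hypothetical, and the data stream is synthetic (points on y = 2x + 1). The principle is the same at any scale: each new batch nudges the existing weights, and nothing is retrained from zero.

```python
class OnlineLinearModel:
    """Linear model updated one small batch at a time with SGD."""

    def __init__(self, n_features, lr=0.05):
        self.w = [0.0] * n_features
        self.b = 0.0
        self.lr = lr

    def predict(self, x):
        return sum(wi * xi for wi, xi in zip(self.w, x)) + self.b

    def update(self, batch):
        """Adjust the current weights using one batch of (features, target) pairs."""
        for x, y in batch:
            err = self.predict(x) - y
            self.w = [wi - self.lr * err * xi for wi, xi in zip(self.w, x)]
            self.b -= self.lr * err


def batches(n_batches, batch_size=5):
    """Synthetic stream: points on y = 2x + 1, cycling through x = 0.0 .. 1.9."""
    for i in range(n_batches):
        xs = [((i * batch_size + j) % 20) / 10 for j in range(batch_size)]
        yield [([x], 2 * x + 1) for x in xs]


model = OnlineLinearModel(n_features=1)
for batch in batches(800):
    model.update(batch)  # the batch can be discarded after this call

print(round(model.predict([3.0]), 2))
```

After enough batches, the prediction for x = 3.0 should be close to 7.0, even though the model never saw the full dataset at once.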

2. Fine-Tuning

Training of the existing model continues on domain-specific or recent data.

3. Reinforcement Learning from Human Feedback (RLHF)

Human ratings or preferences over model responses are used as a training signal to improve future responses.

4. Retrieval-Augmented Generation (RAG)

Instead of retraining, the system retrieves up-to-date information from external sources at query time and passes it to the model as context. The model's weights do not change.
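The retrieval step can be sketched in a few lines. This is a simplified illustration: word overlap stands in for the vector search a production system would more likely use, and `tokenize`, `retrieve`, `build_prompt`, and the sample documents (including the "Acme SDK") are all made up for the example.

```python
import re


def tokenize(text):
    """Lowercase the text and split it into a set of alphanumeric tokens."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def retrieve(query, documents, k=1):
    """Rank documents by word overlap with the query and return the top k."""
    q = tokenize(query)
    ranked = sorted(documents, key=lambda d: len(q & tokenize(d)), reverse=True)
    return ranked[:k]


def build_prompt(query, documents, k=1):
    """Put the retrieved text in front of the question before calling the model."""
    context = "\n".join(retrieve(query, documents, k))
    return f"Answer using only this context:\n{context}\n\nQuestion: {query}"


docs = [
    "Release 2.0 of the hypothetical Acme SDK added streaming support.",
    "The cafeteria menu changes every Monday.",
]
prompt = build_prompt("What did release 2.0 of the Acme SDK add?", docs)
print(prompt)
```

Keeping knowledge current then means updating `docs`, not the model: adding a new document changes what the model sees at query time without any retraining.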

Challenges in Continuous Learning:

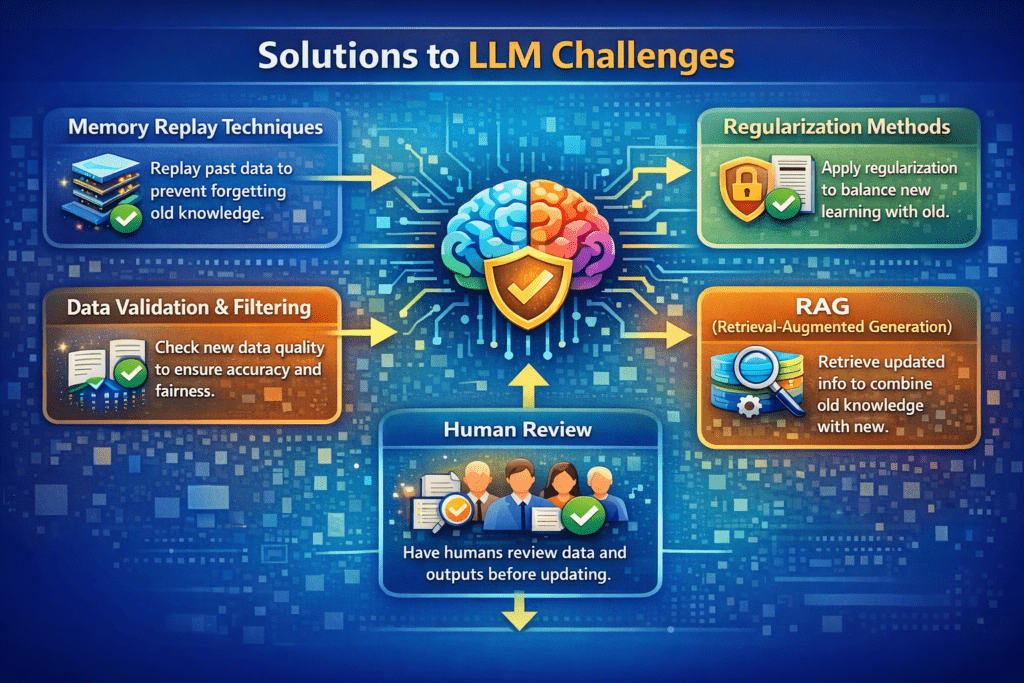

1. Catastrophic Forgetting

When a model is trained on new data, the weight updates can overwrite what it learned earlier, so its performance on older tasks drops.

2. Data Quality Issues

Poor or biased new data can affect performance.

3. High Computational Cost

Frequent retraining requires powerful hardware.

4. Security Risks

Malicious data injected into the update stream (data poisoning) can manipulate the model's behavior.

Solutions to Challenges:

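1. Use memory replay techniques that mix stored older examples into each update

2. Apply regularization methods that limit changes to weights important for earlier knowledge

3. Filter and validate new data

4. Use human review before updating

5. Combine with RAG instead of full retraining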
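Memory replay, the first item above, can be sketched as a small buffer of past examples that is sampled whenever a new batch arrives. This is one possible design, not a prescribed one: `ReplayBuffer`, its `mix` method, and the `replay_fraction` default are hypothetical, and reservoir sampling is used here only as a simple way to keep a uniform sample of everything seen so far.

```python
import random


class ReplayBuffer:
    """Fixed-size sample of past training examples, kept via reservoir sampling."""

    def __init__(self, capacity, seed=0):
        self.capacity = capacity
        self.items = []
        self.seen = 0
        self.rng = random.Random(seed)

    def add(self, example):
        """Store the example, or swap it in with probability capacity / seen."""
        self.seen += 1
        if len(self.items) < self.capacity:
            self.items.append(example)
        else:
            j = self.rng.randrange(self.seen)
            if j < self.capacity:
                self.items[j] = example

    def mix(self, new_batch, replay_fraction=0.5):
        """Return the new examples plus a random sample of stored old ones."""
        n_old = min(len(self.items), int(len(new_batch) * replay_fraction))
        return list(new_batch) + self.rng.sample(self.items, n_old)


buffer = ReplayBuffer(capacity=100)
for i in range(1000):
    buffer.add(("old", i))

new_batch = [("new", i) for i in range(10)]
training_batch = buffer.mix(new_batch)  # 10 new examples plus 5 replayed old ones
for example in new_batch:
    buffer.add(example)  # new data becomes eligible for future replay
```

Training on `training_batch` instead of `new_batch` alone keeps older examples in every update, which is how replay counters catastrophic forgetting.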

Real-World Applications:

1. Customer support chatbots

2. News and trend analysis systems

3. Healthcare diagnosis assistants

4. Financial advisory tools

5. Personalized learning platforms

Continuous Learning vs Traditional Training:

| Feature | Traditional LLM | Continuous Learning LLM |
|---|---|---|
| Training | One-time | Ongoing |
| Knowledge | Static after the training cutoff | Updated regularly |
| Adaptability | Low | High |
| Performance Over Time | Degrades as knowledge becomes outdated | Can be maintained or improved |

Future of Continuous Learning:

Continuous learning could make LLMs:

- More accurate

- More personalized

- Adaptive in near real time

- Industry-specific

- Safer and more reliable

It is a key step toward building intelligent and evolving AI systems.

Conclusion:

Continuous Learning allows Large Language Models to grow and adapt in a fast-changing world. By updating knowledge regularly and learning from new data, LLMs become more accurate, relevant, and useful for real-world applications. This approach is essential for the future of smart and adaptive AI systems.