In today’s data-driven world, businesses no longer want to wait hours or even minutes for insights. Real-time analytics pipelines enable organizations to process and analyze data as it’s generated, unlocking faster decisions, improved customer experiences, and competitive advantages.

Whether you’re tracking user activity, monitoring systems, or detecting fraud, building a real-time analytics pipeline is a powerful capability. This guide walks you through the concepts, architecture, tools, and best practices needed to design and implement one effectively.

What Is a Real-Time Analytics Pipeline?

A real-time analytics pipeline is a system that ingests, processes, and analyzes data continuously as it flows from source to destination, delivering insights with minimal latency (often seconds or less).

Key characteristics:

- Low latency: Data is processed almost instantly.

- Continuous flow: No batching delays.

- Scalability: Handles large volumes of streaming data.

- Fault tolerance: Recovers gracefully from failures.

Why Real-Time Analytics Matters

Real-time pipelines are critical for use cases where timing is everything:

- Fraud detection: Identify suspicious transactions instantly.

- Recommendation engines: Personalize user experience in real time.

- Operational monitoring: Detect system anomalies immediately.

- IoT analytics: Process sensor data on the fly.

- Financial trading: Act on market changes in milliseconds.

Business impact:

- Faster decision-making

- Improved customer engagement

- Reduced risk and downtime

Core Components of a Real-Time Pipeline

A typical real-time analytics pipeline consists of several key layers:

1. Data Sources

These are the origins of your data:

- Web/mobile applications

- IoT devices

- Logs and events

- Databases

2. Data Ingestion Layer

This layer collects and streams data into the pipeline.

Common tools:

- Apache Kafka

- Amazon Kinesis

- Google Cloud Pub/Sub

Responsibilities:

- Buffer incoming data

- Ensure durability

- Handle high-throughput ingestion

3. Stream Processing Layer

This is where real-time transformations and analytics happen.

Popular frameworks:

- Apache Flink

- Apache Spark (Structured Streaming)

- Apache Storm

Tasks performed:

- Data filtering and cleansing

- Aggregations

- Windowing (time-based analysis)

- Enrichment (joining external data)
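
To make two of these tasks concrete, here is a minimal sketch of filtering and enrichment written as plain Python generators. It is not tied to any of the frameworks above; the event fields (`user_id`, `type`) and the `USER_REGIONS` lookup table are assumptions made up for illustration.

```python
from typing import Iterable, Iterator

# Hypothetical lookup table standing in for an external reference dataset.
USER_REGIONS = {"u1": "EU", "u2": "US"}


def clean(events: Iterable[dict]) -> Iterator[dict]:
    """Filtering and cleansing: drop events that lack required fields."""
    for event in events:
        if event.get("user_id") and event.get("type"):
            yield event


def enrich(events: Iterable[dict], regions: dict) -> Iterator[dict]:
    """Enrichment: join each event against a lookup table to add a region."""
    for event in events:
        yield {**event, "region": regions.get(event["user_id"], "unknown")}


raw = [
    {"user_id": "u1", "type": "click"},
    {"user_id": None, "type": "click"},  # dropped: no user id
    {"user_id": "u3", "type": "view"},   # kept, but region is unknown
]

# Generators process one event at a time, which mirrors how a stream flows.
processed = list(enrich(clean(raw), USER_REGIONS))
```

In a real stream processor the lookup would more likely be a cached table or a keyed state store, but the shape of the logic stays the same.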

4. Storage Layer

Processed data needs to be stored for querying and analysis.

Options include:

- Data warehouses (e.g., Snowflake)

- NoSQL databases (e.g., Apache Cassandra)

- Search engines (e.g., Elasticsearch)

5. Visualization & Consumption Layer

This is where insights are delivered to users or systems.

Tools:

- Tableau

- Power BI

- Custom dashboards and APIs

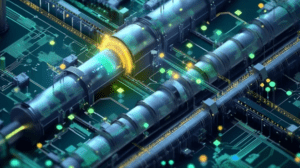

Architecture Overview

A simplified real-time pipeline looks like this:

Data Sources → Ingestion → Stream Processing → Storage → Visualization

Example flow:

- A user clicks a button on a website.

- The event is sent to Kafka.

- A stream processor aggregates clicks in real time.

- The aggregated data is stored in a fast database.

- The dashboard updates within seconds.

Step-by-Step Guide to Building a Pipeline

Step 1: Define Use Case and Requirements

Start with clarity:

- What problem are you solving?

- What latency is acceptable (milliseconds vs seconds)?

- What scale of data do you expect?

Step 2: Choose the Right Tools

Your stack depends on your requirements.

Example stack:

- Ingestion: Kafka

- Processing: Flink

- Storage: Elasticsearch

- Visualization: Tableau

Tip:

Avoid over-engineering: start simple and scale later.

Step 3: Set Up Data Ingestion

Configure your ingestion system:

- Create topics/streams

- Define partitions for scalability

- Ensure fault tolerance via replication
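
The idea behind partitioning can be sketched in a few lines: hash a record key and take the result modulo the partition count, so that all events for the same key land in the same partition. The function name `partition_for`, the choice of MD5, and the partition count below are assumptions for illustration; real systems such as Kafka use their own hash functions.

```python
import hashlib

NUM_PARTITIONS = 6  # assumed value for this sketch


def partition_for(key: str, num_partitions: int) -> int:
    """Map a record key to a partition using a stable hash of the key."""
    digest = hashlib.md5(key.encode("utf-8")).digest()
    return int.from_bytes(digest[:4], "big") % num_partitions


events = [("user-1", "click"), ("user-2", "view"), ("user-1", "purchase")]

# Both "user-1" events get the same partition, so their order is preserved.
assignments = [partition_for(user, NUM_PARTITIONS) for user, _ in events]
```

Keying by user keeps each user's events in order within one partition while still spreading load across partitions. A key with very uneven traffic would create a hot partition, which is worth checking before settling on a key.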

Step 4: Build Stream Processing Logic

This is the heart of your pipeline.

Implement:

- Data transformations

- Aggregations (e.g., count events per minute)

- Event-time processing

Example:

Count the number of user clicks every 10 seconds.
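
The 10-second count can be sketched in plain Python as a tumbling (fixed, non-overlapping) window keyed by window start. This is only an illustration of the logic, not Flink code; the event fields `type` and `timestamp` (in whole seconds) are assumed.

```python
from collections import Counter

WINDOW_SECONDS = 10


def count_clicks_per_window(events, window_seconds=WINDOW_SECONDS):
    """Count click events per tumbling window, keyed by window start time."""
    counts = Counter()
    for event in events:
        if event["type"] != "click":
            continue
        # Use the event's own timestamp (event time), not the arrival time.
        window_start = (event["timestamp"] // window_seconds) * window_seconds
        counts[window_start] += 1
    return dict(sorted(counts.items()))


events = [
    {"type": "click", "timestamp": 1},
    {"type": "click", "timestamp": 9},
    {"type": "view", "timestamp": 9},    # ignored: not a click
    {"type": "click", "timestamp": 10},  # first event of the next window
    {"type": "click", "timestamp": 25},
]

window_counts = count_clicks_per_window(events)
# window_counts == {0: 2, 10: 1, 20: 1}
```

A streaming framework adds what this sketch leaves out: it decides when a window is complete, keeps the counts in fault-tolerant state, and emits results continuously instead of at the end of a list.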

Step 5: Store Processed Data

Choose storage based on use case:

- Real-time dashboards → low-latency databases

- Historical analysis → data warehouse

Step 6: Create Dashboards

Use visualization tools to present insights:

- Real-time metrics

- Alerts and thresholds

- Interactive analytics

Step 7: Monitor and Optimize

Track:

- Latency

- Throughput

- Error rates

Use monitoring tools and alerts to ensure reliability.
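
As a rough sketch of what tracking these three metrics involves, the function below summarizes one measurement window and compares the result against alert thresholds. The function name `summarize` and the threshold values are assumptions chosen for illustration, not recommendations.

```python
def summarize(latencies_ms, errors, window_seconds):
    """Summarize one measurement window: throughput, p95 latency, error rate."""
    total = len(latencies_ms)
    if total == 0:
        return {"throughput_per_s": 0.0, "p95_latency_ms": None, "error_rate": 0.0}
    ordered = sorted(latencies_ms)
    # Nearest-rank percentile, using integer math to avoid float rounding.
    rank = max(1, (95 * total + 99) // 100)
    return {
        "throughput_per_s": total / window_seconds,
        "p95_latency_ms": ordered[rank - 1],
        "error_rate": errors / total,
    }


# Hypothetical alert limits for this sketch.
THRESHOLDS = {"p95_latency_ms": 90, "error_rate": 0.05}

summary = summarize(latencies_ms=list(range(1, 101)), errors=2, window_seconds=10)
alerts = [
    name
    for name, limit in THRESHOLDS.items()
    if summary[name] is not None and summary[name] > limit
]
```

Here the p95 latency of 95 ms exceeds the assumed 90 ms limit, so `alerts` contains `"p95_latency_ms"`, while the 2% error rate stays under its limit. Percentiles are usually more informative than averages for latency, because a few slow events can hide behind a healthy mean.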

Key Design Considerations

1. Latency vs Accuracy

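A tighter latency budget leaves less time to wait for late-arriving data and to run heavier computations:

- Real-time → results may be approximate or revised later

- Batch → results are computed over complete data, so they are typically more accurate

Balance the two based on your needs.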

2. Scalability

Design for growth:

- Partition data streams

- Use distributed systems

- Scale horizontally

3. Fault Tolerance

Failures are inevitable.

Ensure:

- Data replication

- Checkpointing (e.g., in Flink)

- Automatic recovery
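
The core idea of checkpointing can be illustrated with a toy job that periodically saves its input position together with its running state, then resumes from the last saved snapshot after a crash. This is a simplified sketch and not how Flink implements checkpoints; the file layout and the names `save_checkpoint`, `load_checkpoint`, and `run` are assumptions.

```python
import json
import os
import tempfile


def save_checkpoint(path, state):
    """Write state atomically: write a temp file, then rename it into place."""
    tmp_path = path + ".tmp"
    with open(tmp_path, "w") as f:
        json.dump(state, f)
    os.replace(tmp_path, path)


def load_checkpoint(path):
    """Return the last saved state, or a fresh one if no checkpoint exists."""
    if not os.path.exists(path):
        return {"offset": 0, "total": 0}
    with open(path) as f:
        return json.load(f)


def run(events, path, checkpoint_every=2, crash_at=None):
    """Sum events from the checkpointed offset, saving progress periodically."""
    state = load_checkpoint(path)
    for offset in range(state["offset"], len(events)):
        if crash_at is not None and offset == crash_at:
            raise RuntimeError("simulated crash")
        state["total"] += events[offset]
        state["offset"] = offset + 1
        if state["offset"] % checkpoint_every == 0:
            save_checkpoint(path, state)
    save_checkpoint(path, state)
    return state["total"]


events = [5, 1, 4, 2, 3]
checkpoint_path = os.path.join(tempfile.mkdtemp(), "checkpoint.json")

try:
    run(events, checkpoint_path, crash_at=3)
except RuntimeError:
    pass  # the job died; the last checkpoint covers offsets 0 and 1 only

# The restart replays offset 2 from the checkpoint and still sums correctly.
total = run(events, checkpoint_path)
```

Because the offset and the running total are saved together, replaying the event at offset 2 after the restart does not double count it. Saving them separately would break that guarantee.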

4. Data Consistency

Handle:

- Duplicate events

- Out-of-order data

Use techniques like:

- Idempotent processing

- Event-time ordering
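
Both techniques can be sketched in plain Python. The first function skips events whose ID has already been seen; the second buffers events and releases them in event-time order once a watermark (the highest timestamp seen minus an allowed delay) has passed them. The field names `id` and `ts` and the delay of 2 are assumptions for this example.

```python
import heapq


def deduplicate(events):
    """Idempotent handling: skip any event whose ID was already processed."""
    seen = set()  # unbounded here; a real job would expire old IDs
    for event in events:
        if event["id"] in seen:
            continue
        seen.add(event["id"])
        yield event


def reorder_by_event_time(events, max_delay):
    """Release buffered events in timestamp order once the watermark passes."""
    buffer = []  # min-heap of (timestamp, arrival order, event)
    watermark = float("-inf")
    for seq, event in enumerate(events):
        if event["ts"] < watermark:
            continue  # too late: older events were already emitted
        heapq.heappush(buffer, (event["ts"], seq, event))
        watermark = max(watermark, event["ts"] - max_delay)
        while buffer and buffer[0][0] <= watermark:
            yield heapq.heappop(buffer)[2]
    while buffer:  # end of input: flush whatever is still buffered
        yield heapq.heappop(buffer)[2]


arrivals = [
    {"id": "a", "ts": 1},
    {"id": "b", "ts": 3},
    {"id": "a", "ts": 1},  # duplicate delivery
    {"id": "c", "ts": 2},  # arrives after "b" despite an earlier timestamp
    {"id": "d", "ts": 6},
]

ordered_ids = [
    e["id"] for e in reorder_by_event_time(deduplicate(arrivals), max_delay=2)
]
# ordered_ids == ["a", "c", "b", "d"]
```

The allowed delay is the trade-off knob: a larger value tolerates later events but holds results back longer.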

Common Challenges

1. Handling Data Spikes

Sudden traffic increases can overwhelm systems.

Solution: Auto-scaling and buffering.
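
Buffering is easiest to see with a bounded queue: once the buffer is full, the producer gets an explicit signal instead of the consumer silently falling over. The sketch below uses Python's standard `queue` module; the helper name `offer` and the tiny capacity are assumptions made so the effect is visible.

```python
import queue


def offer(buffer: queue.Queue, event) -> bool:
    """Try to enqueue an event; return False instead of blocking when full."""
    try:
        buffer.put_nowait(event)
        return True
    except queue.Full:
        return False


# A deliberately small capacity so a five-event spike overflows it.
buffer = queue.Queue(maxsize=3)
spike = range(5)

accepted = [event for event in spike if offer(buffer, event)]
rejected = [event for event in spike if event not in accepted]
```

What to do with rejected events is a design decision: the producer can retry with backoff, slow down, or shed load. A durable log such as Kafka plays the same buffering role at much larger scale, since consumers can fall behind and catch up later.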

2. Debugging Streaming Systems

Real-time systems are harder to debug than batch systems.

Solution:

- Logging

- Replay mechanisms

- Monitoring dashboards

3. Schema Evolution

Data formats change over time.

Solution:

- Use schema registries

- Maintain backward compatibility
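
Backward compatibility usually comes down to one rule: new fields need defaults, so records written with the old schema can still be read. The sketch below shows that rule with a dataclass; the event type `ClickEventV2` and its fields are hypothetical, and a schema registry would enforce the same rule centrally.

```python
from dataclasses import dataclass, fields


@dataclass
class ClickEventV2:
    user_id: str
    page: str
    device: str = "unknown"  # added in v2; the default keeps v1 records readable


def parse_click(record: dict) -> ClickEventV2:
    """Build an event from a raw record, ignoring fields this version lacks."""
    known_names = {f.name for f in fields(ClickEventV2)}
    known = {k: v for k, v in record.items() if k in known_names}
    return ClickEventV2(**known)


old_record = {"user_id": "u1", "page": "/home"}  # written before "device" existed
new_record = {"user_id": "u2", "page": "/cart", "device": "mobile", "extra": 1}

old_event = parse_click(old_record)
new_event = parse_click(new_record)
```

The old record parses with `device` set to its default, and the unknown `extra` field in the newer record is ignored rather than causing a failure.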

Best Practices

- Keep pipelines modular and loosely coupled

- Use schema validation

- Monitor everything

- Automate deployment with CI/CD

- Test with real-world scenarios

- Document your pipeline architecture

Real-World Example

Use Case: E-commerce Analytics

An online store wants real-time insights into user behavior.

Pipeline:

- User actions → Kafka

- Stream processing → Flink aggregates events

- Data stored → Elasticsearch

- Dashboard → Tableau shows live metrics

Outcome:

- Real-time sales tracking

- Instant anomaly detection

- Better marketing decisions

Future Trends in Real-Time Pipelines

- AI-driven pipelines: Automated anomaly detection

- Serverless streaming: Reduced infrastructure management

- Edge computing: Processing closer to data sources

- Unified batch + streaming systems

Final Thoughts

Building a real-time analytics pipeline may seem complex, but breaking it into clear components makes it manageable. Start with a focused use case, choose the right tools, and design for scalability and resilience from the beginning.

Real-time data isn’t just a technical upgrade; it’s a strategic advantage. Organizations that invest in fast, reliable pipelines are better positioned to respond to change, delight users, and stay ahead of the competition.