Introduction:

Large Language Models (LLMs) like GPT, Claude, and other AI systems are transforming how businesses build intelligent applications. However, deploying an LLM is only the beginning. To ensure reliability, cost-efficiency, security, and performance, organizations need effective LLM Management.

This guide explains what LLM management is, why it matters, and the best practices to manage LLMs successfully.

What is LLM Management?

LLM Management refers to the process of monitoring, optimizing, securing, and maintaining large language models throughout their lifecycle.

It includes:

- Model selection and deployment

- Prompt management

- Performance monitoring

- Cost optimization

- Security and compliance

- Version control and updates

In simple terms, LLM management ensures your AI system works accurately, safely, and efficiently in real-world applications.

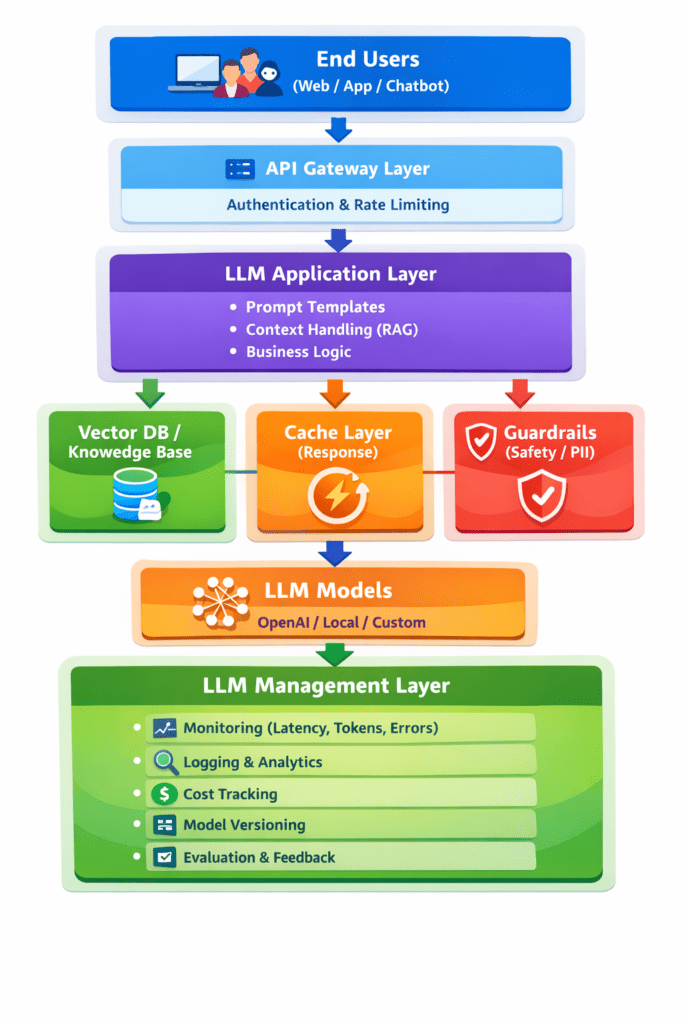

LLM Management Architecture Diagram

Why LLM Management is Important:

Without proper management, LLM-based systems can face several issues:

- High operational costs due to excessive API usage

- Inaccurate or inconsistent responses

- Security risks and data leaks

- Poor user experience

- Difficulty in scaling applications

Effective LLM management helps organizations:

- Improve response quality

- Reduce costs

- Maintain compliance

- Scale AI systems confidently

Key Components of LLM Management:

1. Model Selection and Versioning

Choose the right model based on:

- Accuracy requirements

- Speed and latency

- Cost considerations

- Domain specialization

Version control helps track changes and roll back if performance drops.
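To make the rollback idea concrete, here is a minimal sketch of a version registry. It assumes model versions are identified by simple string labels; the `ModelRegistry` class and the `support-bot-v1`/`support-bot-v2` labels are hypothetical names invented for illustration, not part of any specific tool.

```python
from dataclasses import dataclass, field


@dataclass
class ModelRegistry:
    """Tracks which model version is live and keeps a history for rollback."""

    history: list[str] = field(default_factory=list)

    def deploy(self, version: str) -> None:
        # Record every deployment so earlier versions stay recoverable.
        self.history.append(version)

    @property
    def active(self) -> str:
        if not self.history:
            raise RuntimeError("no model version deployed")
        return self.history[-1]

    def rollback(self) -> str:
        # Drop the current version and return to the previous one.
        if len(self.history) < 2:
            raise RuntimeError("no earlier version to roll back to")
        self.history.pop()
        return self.active


registry = ModelRegistry()
registry.deploy("support-bot-v1")  # hypothetical version labels
registry.deploy("support-bot-v2")
rolled_back_to = registry.rollback()  # performance dropped, so revert
```

In practice the history would probably live in a database or a dedicated model registry rather than in memory, but the deploy/rollback flow stays the same.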

2. Prompt Management

Prompts play a critical role in LLM performance.

Best practices:

- Create structured prompts

- Use prompt templates

- Maintain a prompt library

- Continuously test and optimize prompts

Good prompt management improves consistency and reduces errors.
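As a rough illustration, a prompt library can start as a dictionary of versioned templates. The sketch below uses Python's standard `string.Template`; the template names, version labels, and the `render_prompt` helper are assumptions made for this example.

```python
from string import Template

# Hypothetical prompt library keyed by (name, version).
PROMPT_LIBRARY = {
    ("summarize", "v1"): Template(
        "Summarize the following text in $max_sentences sentences:\n\n$text"
    ),
    ("classify", "v1"): Template(
        "Classify the sentiment of this review as positive, negative, "
        "or neutral:\n\n$review"
    ),
}


def render_prompt(name: str, version: str, **values) -> str:
    """Fill a stored template; raises KeyError if a placeholder is missing."""
    template = PROMPT_LIBRARY[(name, version)]
    return template.substitute(**values)


prompt = render_prompt(
    "summarize",
    "v1",
    max_sentences=2,
    text="LLM management covers monitoring and cost control.",
)
```

Keying templates by version makes it possible to test a new wording side by side with the old one before switching over.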

3. Performance Monitoring

Track key metrics such as:

- Response accuracy

- Latency

- Token usage

- Error rates

- User feedback

Monitoring helps identify issues early and maintain system quality.
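The sketch below shows one simple way to aggregate some of these metrics from per-call records. The `CallRecord` fields and the sample numbers are made up for illustration; a real system would fill them from API responses and application logs.

```python
from dataclasses import dataclass
from statistics import mean


@dataclass
class CallRecord:
    """One LLM call; field names are illustrative assumptions."""

    latency_ms: float
    prompt_tokens: int
    completion_tokens: int
    ok: bool


def summarize_calls(records: list[CallRecord]) -> dict:
    """Aggregate latency, token usage, and error rate over a batch of calls."""
    if not records:
        raise ValueError("no records to summarize")
    return {
        "calls": len(records),
        "avg_latency_ms": mean([r.latency_ms for r in records]),
        "total_tokens": sum(r.prompt_tokens + r.completion_tokens for r in records),
        "error_rate": sum(1 for r in records if not r.ok) / len(records),
    }


# Made-up sample data, not measurements from a real system.
sample = [
    CallRecord(120.0, 50, 100, True),
    CallRecord(300.0, 80, 0, False),
    CallRecord(180.0, 40, 60, True),
    CallRecord(200.0, 30, 40, True),
]
summary = summarize_calls(sample)
```

Response accuracy and user feedback usually need labeled data or ratings, so they are left out of this sketch.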

4. Cost Optimization

LLM usage can become expensive if not controlled.

Strategies include:

- Limiting token length

- Using smaller models for simple tasks

- Caching frequent responses

- Setting usage limits

Cost management is essential for scalable AI operations.
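Two of these strategies, caching and routing simple tasks to a smaller model, can be sketched in a few lines. Everything here is an assumption for illustration: `fake_model` stands in for a real API call, the model names are placeholders, and the word-count threshold is an arbitrary stand-in for a real complexity check.

```python
from typing import Callable


def make_cached_client(call_model: Callable[[str], str]) -> Callable[[str], str]:
    """Wrap a model call so repeated prompts are served from memory."""
    cache: dict[str, str] = {}

    def ask(prompt: str) -> str:
        # Normalize case and whitespace so trivially different prompts share an entry.
        key = " ".join(prompt.lower().split())
        if key not in cache:
            cache[key] = call_model(prompt)
        return cache[key]

    return ask


def pick_model(prompt: str, word_limit: int = 50) -> str:
    """Route short prompts to a cheaper model (placeholder names and threshold)."""
    return "small-model" if len(prompt.split()) <= word_limit else "large-model"


calls_made = []


def fake_model(prompt: str) -> str:
    # Stand-in for a paid API call; records each invocation.
    calls_made.append(prompt)
    return f"answer to: {prompt}"


ask = make_cached_client(fake_model)
first = ask("What is LLM management?")
second = ask("what is  LLM management?")  # served from the cache
```

An exact-match cache like this only helps when the same questions recur; whether the normalization is safe depends on the application.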

5. Security and Compliance

LLMs may process sensitive data. Proper safeguards include:

- Data encryption

- Input/output filtering

- PII detection and masking

- Access control

- Compliance with regulations (GDPR, HIPAA, etc.)

Security is a critical part of responsible AI deployment.
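As a small example of PII masking, the sketch below redacts email addresses and US-style phone numbers before text is sent to a model. The regular expressions are deliberately simple assumptions and will miss many real-world formats; production systems typically rely on dedicated PII detection tooling.

```python
import re

# Simplified patterns for illustration only; not exhaustive.
EMAIL_RE = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")
PHONE_RE = re.compile(r"\b\d{3}[-.\s]\d{3}[-.\s]\d{4}\b")


def mask_pii(text: str) -> str:
    """Replace matched emails and phone numbers with placeholder tags."""
    text = EMAIL_RE.sub("[EMAIL]", text)
    return PHONE_RE.sub("[PHONE]", text)


masked = mask_pii("Contact jane.doe@example.com or 555-123-4567.")
# masked == "Contact [EMAIL] or [PHONE]."
```

The same function can be applied to model output as part of output filtering.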

6. Evaluation and Testing

Regular evaluation ensures the model performs well.

Common methods:

- Benchmark testing

- Human evaluation

- A/B testing

- Automated quality checks

Continuous testing improves reliability over time.
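An automated quality check can be as simple as running a fixed set of prompts and verifying that each answer contains the expected terms. In this sketch, `stub_model` and the test cases are invented for illustration; a real harness would call the deployed model and use a larger, curated test set.

```python
from typing import Callable


def run_eval(
    model_fn: Callable[[str], str],
    cases: list[tuple[str, list[str]]],
) -> float:
    """Return the fraction of cases whose answer contains all required terms."""
    passed = 0
    for prompt, required_terms in cases:
        answer = model_fn(prompt).lower()
        if all(term.lower() in answer for term in required_terms):
            passed += 1
    return passed / len(cases)


def stub_model(prompt: str) -> str:
    # Stand-in for a real model call, with canned answers.
    canned = {
        "What is the capital of France?": "The capital of France is Paris.",
        "What is 2 + 2?": "The answer is 5.",
    }
    return canned.get(prompt, "")


cases = [
    ("What is the capital of France?", ["Paris"]),
    ("What is 2 + 2?", ["4"]),
]
pass_rate = run_eval(stub_model, cases)
```

Running the same cases against two model or prompt versions gives a basic A/B comparison, though keyword matching is a coarse proxy and works best alongside human evaluation.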

Challenges in LLM Management:

Organizations often face:

- Model hallucinations (plausible-sounding but incorrect information)

- High infrastructure costs

- Managing multiple model versions

- Ensuring data privacy

- Maintaining consistent output quality

Addressing these challenges requires a structured management strategy.

Best Practices for Effective LLM Management:

- Start with a clear use case

- Use prompt engineering before fine-tuning

- Monitor performance continuously

- Implement guardrails and content filters

- Optimize for cost and latency

- Maintain detailed logs and analytics

- Regularly update and evaluate models

Following these practices improves the chances of long-term success.

Tools for LLM Management:

Popular tools and platforms include:

- LangChain

- LlamaIndex

- Weights & Biases

- MLflow

- OpenAI monitoring tools

- Prompt management platforms

These tools help streamline deployment, monitoring, and optimization.

Future of LLM Management:

As AI adoption grows, LLM management will become more sophisticated with:

- Automated model optimization

- Real-time monitoring and alerts

- Advanced safety guardrails

- AI governance frameworks

- Multi-model orchestration

Organizations that invest in proper management will gain a strong competitive advantage.

Conclusion:

LLM management is essential for building reliable, scalable, and cost-effective AI applications. By focusing on monitoring, optimization, security, and continuous improvement, businesses can unlock the full potential of large language models while minimizing risks.

As AI continues to evolve, effective LLM management will be the key to successful and responsible AI deployment.