Introduction:

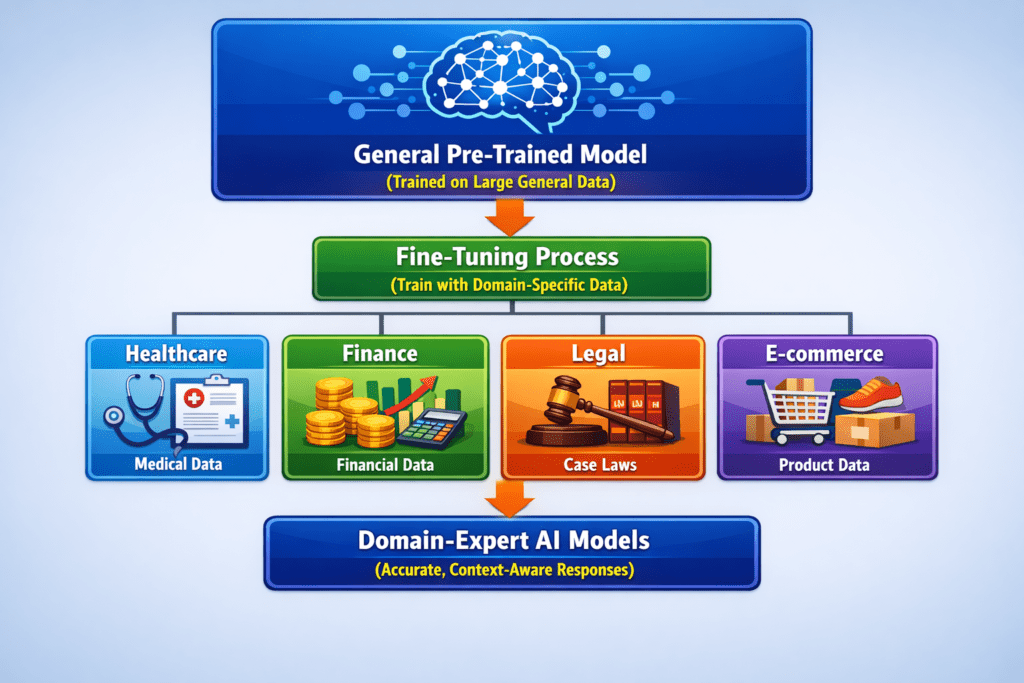

Artificial Intelligence models are usually trained on large, general datasets to understand a wide range of topics. However, in real-world applications, businesses and organizations often need models that perform well in a specific field such as healthcare, finance, law, education, or customer support. This is where fine-tuning becomes essential.

What is Fine-Tuning?

Fine-tuning is the process of taking a pre-trained model and training it further using a domain-specific dataset. Instead of building a model from scratch, fine-tuning allows the model to adapt its knowledge to a particular industry, terminology, or task.

For example:

- A general language model → understands basic English

- A fine-tuned medical model → understands clinical terms, diagnoses, and prescriptions

This approach saves time, reduces computational cost, and improves accuracy for specialized use cases.

Why Fine-Tuning is Important for Domain Applications:

- Improved Accuracy

Domain-specific data helps the model understand industry terminology and context better.

- Better Context Understanding

For example, the word “charge” means different things in law, finance, and electronics.

- Faster Deployment

Since the base model is already trained, only additional training is needed.

- Cost Efficiency

Training from scratch requires massive data and computing power. Fine-tuning is more economical.

Examples of Domain-Specific Fine-Tuning:

Healthcare

- Medical report summarization

- Disease prediction

- Clinical chatbot assistance

Finance

- Fraud detection

- Risk analysis

- Automated financial reporting

Legal

- Contract analysis

- Case law search

- Legal document classification

Customer Support

- Automated ticket classification

- AI chatbots trained on company FAQs

- Sentiment analysis for customer feedback

How Fine-Tuning Works (Step-by-Step):

- Choose a pre-trained model (e.g., BERT, GPT, or other foundation models)

- Collect domain-specific data

- Clean and preprocess the dataset

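- Train the model on the new data with a lower learning rate than was used in pre-training, so the existing weights are adjusted gradually rather than overwritten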

- Evaluate performance using validation data

- Deploy the fine-tuned model for real-world use
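
To make the steps above concrete, here is a minimal sketch in plain Python. It is only a toy: a two-parameter linear model stands in for a real foundation model such as BERT or GPT, and the datasets, the helper names (`mse`, `train`), and the learning rates are assumptions chosen for illustration, not values from any real project. It shows the overall flow: start from pre-trained weights, continue training on a small domain dataset with a lower learning rate, and compare validation error before and after.

```python
# Toy stand-in for a pre-trained model: y = w * x + b with two parameters.
# All data and hyperparameters below are illustrative assumptions.

def mse(params, data):
    """Mean squared error of the linear model on (x, y) pairs."""
    w, b = params
    return sum((w * x + b - y) ** 2 for x, y in data) / len(data)

def train(params, data, learning_rate, epochs):
    """Full-batch gradient descent on MSE; returns the updated (w, b)."""
    w, b = params
    n = len(data)
    for _ in range(epochs):
        grad_w = sum(2 * (w * x + b - y) * x for x, y in data) / n
        grad_b = sum(2 * (w * x + b - y) for x, y in data) / n
        w -= learning_rate * grad_w
        b -= learning_rate * grad_b
    return w, b

# Step 1: obtain a "pre-trained" model (here: trained on general data y = 2x).
general_data = [(i / 10, 2 * (i / 10)) for i in range(11)]
pretrained = train((0.0, 0.0), general_data, learning_rate=0.1, epochs=2000)

# Steps 2-3: a small, clean domain dataset (y = 2x + 1), split into
# training and validation portions.
domain_train = [(x, 2 * x + 1) for x in (0.05, 0.25, 0.45, 0.65, 0.85)]
domain_val = [(x, 2 * x + 1) for x in (0.15, 0.55, 0.95)]

# Step 4: continue training from the pre-trained weights with a lower
# learning rate than the one used for pre-training.
fine_tuned = train(pretrained, domain_train, learning_rate=0.01, epochs=200)

# Step 5: evaluate on held-out validation data.
val_before = mse(pretrained, domain_val)
val_after = mse(fine_tuned, domain_val)
print(f"validation MSE before fine-tuning: {val_before:.4f}")
print(f"validation MSE after fine-tuning:  {val_after:.4f}")
```

In a real project the same flow would typically run through a deep learning framework, with a tokenizer, batching, and a GPU, but the order of operations stays the same.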

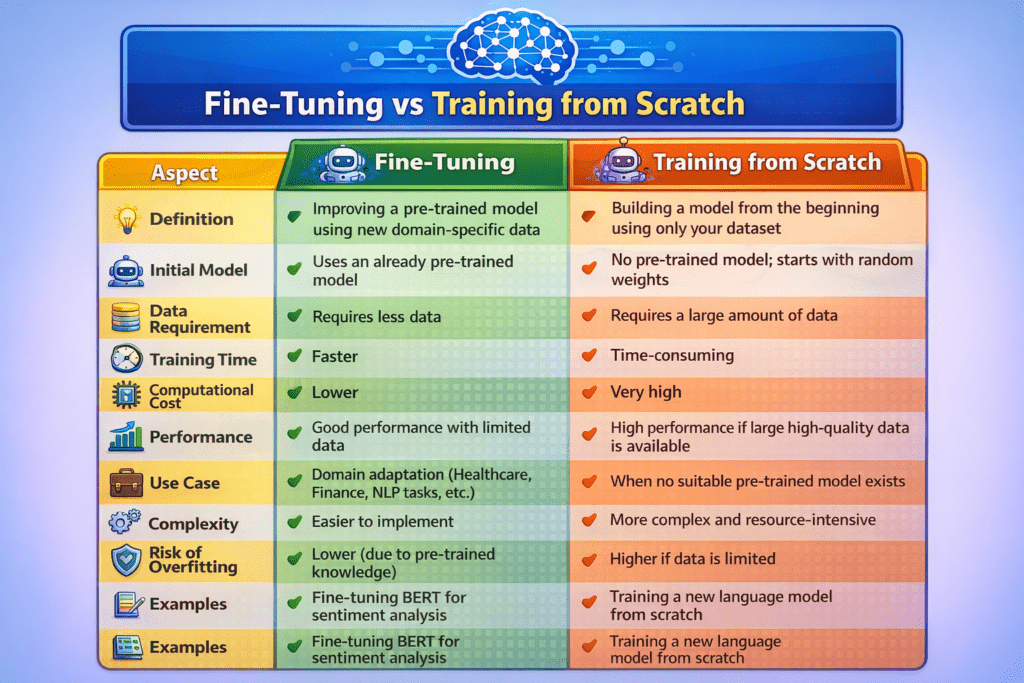

Fine-Tuning vs Training from Scratch:

| Aspect | Fine-Tuning | Training from Scratch |
|---|---|---|
| Definition | Improving a pre-trained model using new domain-specific data | Building a model from the beginning using only your dataset |
| Initial Model | Uses an already pre-trained model | No pre-trained model; starts with random weights |
| Data Requirement | Requires less data | Requires a large amount of data |
| Training Time | Faster | Time-consuming |
| Computational Cost | Lower | Very high |
| Performance | Good performance with limited data | High performance if large high-quality data is available |
| Use Case | Domain adaptation (Healthcare, Finance, NLP tasks, etc.) | When no suitable pre-trained model exists |
| Complexity | Easier to implement | More complex and resource-intensive |
| Risk of Overfitting | Lower (due to pre-trained knowledge) | Higher if data is limited |
| Examples | Fine-tuning BERT for sentiment analysis | Training a new language model from scratch |
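
The "Training Time" row can be illustrated with a small experiment. The sketch below is a toy under stated assumptions: a two-parameter linear model replaces a real network, a zero initialization stands in for the random weights of an untrained model, and the data, helper names, and training budget are all hypothetical. It gives both approaches the same small domain dataset and the same number of update steps, then compares validation error.

```python
# Toy comparison: same domain data and same training budget, different start.
# The model, data, and hyperparameters are illustrative assumptions.

def mse(params, data):
    """Mean squared error of y = w * x + b on (x, y) pairs."""
    w, b = params
    return sum((w * x + b - y) ** 2 for x, y in data) / len(data)

def train(params, data, learning_rate, epochs):
    """Full-batch gradient descent on MSE; returns the updated (w, b)."""
    w, b = params
    n = len(data)
    for _ in range(epochs):
        grad_w = sum(2 * (w * x + b - y) * x for x, y in data) / n
        grad_b = sum(2 * (w * x + b - y) for x, y in data) / n
        w -= learning_rate * grad_w
        b -= learning_rate * grad_b
    return w, b

general_data = [(i / 10, 2 * (i / 10)) for i in range(11)]               # y = 2x
domain_train = [(x, 2 * x + 1) for x in (0.05, 0.25, 0.45, 0.65, 0.85)]  # y = 2x + 1
domain_val = [(x, 2 * x + 1) for x in (0.15, 0.55, 0.95)]

# Identical budget for both approaches.
BUDGET = {"learning_rate": 0.01, "epochs": 200}

# Fine-tuning: start from weights learned on the general data.
pretrained = train((0.0, 0.0), general_data, learning_rate=0.1, epochs=2000)
fine_tuned = train(pretrained, domain_train, **BUDGET)

# From scratch: start from an untrained model (zeros used here for simplicity).
from_scratch = train((0.0, 0.0), domain_train, **BUDGET)

fine_tuned_val = mse(fine_tuned, domain_val)
scratch_val = mse(from_scratch, domain_val)
print(f"fine-tuned validation MSE:   {fine_tuned_val:.4f}")
print(f"from-scratch validation MSE: {scratch_val:.4f}")
```

In this toy problem both runs would eventually converge to the same answer given enough steps, so the gap only reflects the head start that pre-trained weights provide. It is not a benchmark of real models.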

Challenges in Domain Fine-Tuning:

- Limited availability of quality domain data

- Risk of overfitting if the dataset is too small

- Data privacy concerns (especially in healthcare and finance)

- Need for domain experts to validate results

Best Practices for Effective Fine-Tuning:

- Use high-quality and well-labeled data

- Start with a strong pre-trained foundation model

- Avoid over-training on small datasets

- Regularly evaluate performance

- Update the model periodically with new domain data
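
One common way to combine "avoid over-training" with "regularly evaluate performance" is early stopping: check validation loss after every epoch and stop once it has not improved for a few epochs in a row. The helper below is a minimal sketch of that idea. The function name `early_stopping`, its parameters, and the example loss values are hypothetical and not tied to any particular library.

```python
def early_stopping(val_losses, patience=2, min_delta=0.0):
    """Return (best_epoch, stop_epoch) for a sequence of validation losses.

    Training stops once the loss has failed to improve by more than
    min_delta for `patience` consecutive epochs. Epochs are 0-indexed.
    """
    best_loss = float("inf")
    best_epoch = -1
    epochs_without_improvement = 0
    for epoch, loss in enumerate(val_losses):
        if loss < best_loss - min_delta:
            # New best: remember it and reset the counter.
            best_loss = loss
            best_epoch = epoch
            epochs_without_improvement = 0
        else:
            epochs_without_improvement += 1
            if epochs_without_improvement >= patience:
                return best_epoch, epoch
    # Never triggered: training ran through every recorded epoch.
    return best_epoch, len(val_losses) - 1

# Made-up validation losses: improvement stalls after epoch 2.
losses = [1.0, 0.8, 0.7, 0.75, 0.9, 0.6]
best_epoch, stop_epoch = early_stopping(losses, patience=2)
print(f"keep the checkpoint from epoch {best_epoch}, stop at epoch {stop_epoch}")
```

In practice the checkpoint saved at `best_epoch` is the one to deploy. A larger `patience` tolerates noisier validation curves at the cost of extra training time.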

Conclusion:

Fine-tuning for specific domains enables AI models to move beyond general knowledge and deliver expert-level performance. By adapting models to industry-specific data, organizations can build smarter, more reliable, and highly relevant AI solutions. As AI adoption grows, domain-focused fine-tuning will play a key role in creating efficient and intelligent applications tailored to real-world needs.